Measuring Student Growth In India

Posted: January 13th, 2015 | Author: Michael Goldstein

Michael Dell made a fortune on computers. He and his wife Susan set up a foundation, MSDF, to give away a ton of dough to ed reform causes.

They just released a report. It’s about Assessment in India. Good stuff.

MSDF has donated more than $130 million in India. But do their investments pay off for children? That’s what they wanted to better understand.

Specifically: How can they measure student growth? Because growth is what matters. See here.

In the USA, over the past few years, state governments have taken this on. They set up good measurements of student growth. That data has been a boon for all sorts of analysts, to judge “what works and what doesn’t.” For example, the average charter school does not generate much student growth compared to traditional schools. However, there is a particular segment of charters that generates huge growth for kids, often called “No Excuses” schools.

Anyway, growth metrics in the USA face political opposition. For one thing, annual growth measurements allow individual teachers, strong and weak, to be identified. The status quo (99%+ of US teachers rated “satisfactory”) has appeal in certain quarters. So there is an unusual alliance brewing in the US, where traditional political enemies (teachers unions and Republicans) may be joining forces in 2015 to block the collection of annual growth data. But I digress. Back to India.

So a foundation like MSDF, which in its USA reform work does not have to invent its own set of assessments, does have to invent one in the India context. Otherwise it can’t easily learn as an organization.

They set up a common test for Grades 3, 5, 7, and 9 in English and Math, with a baseline and endline each year. If you’re a grantee, you must participate (if I’m reading the report correctly).

As a result, they learn about “What works.”

For example, imagine Grantee 7 and Grantee 1. In absolute terms, Grantee 7 kids score 545 on an exam, and Grantee 1 scores 515. Grantee 7 is better. Duh.

Wait a minute.

With the new “Growth” data, MSDF learns Grantee 1 is making large gains. 460 to 515. Grantee 7 very small gains. 535 to 545.

Kaboom! Measured by Growth, Grantee 1 is doing some great things. Grantee 7 is not. Grantee 7 needs to step it up, or (presumably) MSDF would withdraw support after some years, once the grantee has had some sort of chance to change its approach.
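The Grantee 1 vs. Grantee 7 comparison is just endline minus baseline. Here is a minimal sketch in Python using only the four scores quoted above. The `Grantee` class and its `growth` property are names made up for illustration; nothing here comes from the MSDF report itself.

```python
from dataclasses import dataclass


@dataclass
class Grantee:
    name: str
    baseline: int  # score at the start of the year
    endline: int   # score at the end of the year

    @property
    def growth(self) -> int:
        # Growth is simply the change from baseline to endline.
        return self.endline - self.baseline


# Scores as quoted in the post.
grantees = [
    Grantee("Grantee 1", baseline=460, endline=515),
    Grantee("Grantee 7", baseline=535, endline=545),
]

# Rank the same grantees two ways: by absolute endline score and by growth.
by_absolute = sorted(grantees, key=lambda g: g.endline, reverse=True)
by_growth = sorted(grantees, key=lambda g: g.growth, reverse=True)

for g in grantees:
    print(f"{g.name}: endline {g.endline}, growth {g.growth:+d}")
print("Top by absolute score:", by_absolute[0].name)
print("Top by growth:", by_growth[0].name)
```

Same two grantees, opposite rankings: Grantee 7 wins on absolute score, Grantee 1 wins on growth.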

At Bridge, we see things the same way as MSDF. With our “Academic” hats on, we care only about Growth.

Now that’s not the only “hat” we have. Our parents care about Absolute Scores. That is true everywhere, from Kenya to India to Americans in wealthy suburbs. Parents all mistakenly treat “high absolute scores” as a proxy for “good schools.” The whole notion of Student Growth is a pretty new concept. It will take time for this concept to become sticky with parents, if it ever does. And since we care about our parents at Bridge, we care about what they care about.

Now back to MSDF. A couple anecdotes:

*They learned that the grantees doing after-school Academic Coaching for teachers seem to produce high math gains for kids. I had the opportunity to talk some months ago to one of their staffers about this work, and I’d like to learn even more.

*One grantee, KEF, which works on leadership training, was getting low growth. One cause: these schools weren’t particularly good at teaching basic skills. Moreover, their previous math test didn’t assess these “simple skills” very well. So they got a new test; then they changed leadership training to help school headmasters push a focus on these skills, and on how to use data to improve this type of instruction. Evidently, growth went up. That’s a victory for the kids they serve.

The KEF example reminds me of issues I faced at Match Charter Schools back in Boston. The Massachusetts state exam doesn’t test simple fact recall like “4 times 7” for 3rd graders (the first age at which kids are tested). They prefer to measure “higher order skill.” Of course “higher order skill” is tough to do if you can’t do the easy stuff. See here.

By contrast, at Bridge we use a test called EGMA that was commissioned by the US Government (USAID). It does start with the (appropriate) easy stuff, like counting, and moves up from there. I actually think EGMA would be valuable in the USA context. Oh well.

My only quibble with the MSDF report was this (but I may be reading it wrong).

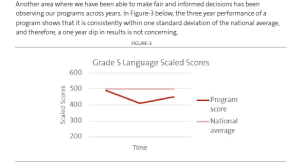

If the dip from 500 to 405 is actually anywhere close to one standard deviation, and then the rebound looks to be something like 405 to 455, that’s still very concerning! A half standard deviation worse off in language is quite large. But who knows what the full story is here; there’s nothing in the report besides that graphic.
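To make the "half standard deviation" arithmetic explicit, here is a rough sketch. The three scores are my eyeballed readings from the graphic, and the standard deviation is an assumption: I am taking the post's "if" at face value and treating the 95-point dip as one SD. The report does not state the actual SD.

```python
# Approximate readings from the graphic, as quoted in the post.
start, low, rebound = 500, 405, 455

# ASSUMPTION: the dip from 500 to 405 is about one standard deviation.
assumed_sd = start - low  # 95 points

# How far below the starting score the kids still are after the rebound.
remaining_gap = start - rebound
gap_in_sd = remaining_gap / assumed_sd

print(f"Remaining gap: {remaining_gap} points, about {gap_in_sd:.2f} SD")
```

Under that assumption the kids end up 45 points, or a bit under half an SD, below where they started. If the real SD is larger or smaller than 95, the gap in SD terms shrinks or grows accordingly.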

Anyway, if you dig ed reform in the developing world, it’s worth reading the report.

The director, Janet Mountain, writes:

We’ve learned again and again and in all the fields we’ve worked in that you can’t manage (much less improve upon) what you can’t accurately measure. No one can. So, for the last seven years, our India team has worked to devise a reliable measurement system that helps us better manage our work.

We hope it also explains both why such a framework matters and how it can enable transformation within the classroom, throughout schools, and across cities and even states.

I 100% agree. My fondest technocratic wish for Kenyan (and soon Ugandan and Nigerian, and Indian in 2016) education policy is that they’d follow the recommendation of Peter Musyoka and measure student growth. It’s the only way to discover “what works.”

You may be interested in this post on comparing test scores. Sounds like it might not apply in Bridge’s case if you are using the same items across schools, but still interesting:

http://blogs.worldbank.org/impactevaluations/how-standard-standard-deviation-cautionary-note-using-sds-compare-across-impact-evaluations

Thanks Ryan. Great blog post.